Table of Contents

- Physical Server vs Virtual Machine – The Choice is Open

- What is a Physical Server?

- Types of Servers

- What is a Virtual Machine?

- Types of VMs

- Physical vs Virtual Machine Feature Comparison

- Costs

- Physical Footprint

- Lifespan

- Migration

- Performance

- Efficiency

- Disaster Recovery and High-Availability

- How Do You Choose?

- Physical Server and Virtual Machine Backups

IT infrastructure has changed drastically over the past decade or more. With the rise of virtualization, organizations have shifted the way business-critical workloads are provisioned, managed, and housed in the infrastructure. Instead of configuring a server workload in a 1:1 fashion with one workload per physical server, virtualization has brought about the ability to run many software workloads on a single set of physical hardware.

As processing, network, and storage hardware have grown more powerful, virtualization has allowed organizations to use that added capacity far more efficiently across the entire landscape. However, there may still be cases where a physical server is preferable for some workloads.

Let’s take a look at the important differences between a physical server and a virtual machine.

Physical Server vs Virtual Machine – The Choice is Open

Before deciding which of the two should run your business-critical workloads, let’s get a better understanding of each. We will consider the following:

- What is a physical server?

- What is a virtual machine?

- Physical vs virtual machine feature comparison

- How do you choose?

- Physical server and virtual machine backups

Let’s start by looking at physical servers.

What is a Physical Server?

For most, the physical server is a well-understood part of IT infrastructure that has been around since the very beginning. A physical server is hardware you can touch and feel. A physical server running its operating system directly on the hardware is often referred to as “bare-metal”.

It generally includes all the physical hardware components, contained in the server case, that allow it to function. Physical servers typically have a CPU, RAM, and some type of internal storage from which the operating system is loaded and booted. A server may or may not have general-purpose storage beyond the storage used for the operating system.

In the data center, your physical connections terminate at the physical server itself. These include power, network, and storage connections, as well as other peripheral devices and hardware.

Bare-metal servers that run a single application generally provide applications and data for a single “tenant”. In simple terms, a tenant is a customer or consumer. A single-tenant deployment is a single instance of the software and supporting infrastructure that serves a single customer. In a single-tenant environment, each customer would generally have its own set of physical hardware dedicated to serving its particular resources.

Types of Servers

Even though you may think of a physical server as a “one size fits all” piece of hardware, physical servers come in many kinds, sizes, and purposes. Common server types include the following:

- Tower Servers – Generally lower cost and less powerful than their rackmount and modular counterparts. Often found in edge or small business environments where no server rack is installed, or where there is not enough other rackmount equipment to justify purchasing one

- Rackmount Servers – Rackmount servers are the typical servers you think about when thinking about an enterprise data center environment and are mounted in a standard server rack

- HCI or Modular Servers – Sometimes known as “blade” servers or hyper-converged form factors, these typically let you add or scale compute, storage, and network capacity simply by installing a new “server blade” or “module” into the chassis

These are certainly not the only server types available for purchase. However, they are the most common physical form factors you will find in an enterprise data center environment.

What is a Virtual Machine?

Virtual machines are arguably the most common type of IT infrastructure found in today’s environments. While containers are certainly gaining traction, virtual machines remain the de facto standard in today’s virtualized environments.

Virtual machines are made possible by installing a hypervisor on a “bare-metal” server. A common approach for many popular hypervisors today, such as VMware vSphere and Microsoft Hyper-V, is to virtualize the hardware of the underlying physical server and present this virtualized hardware to the guest operating system. The hypervisor generally includes a CPU scheduler of some sort that brokers requests between the guest operating systems running in virtual machines and the physical CPUs installed in the underlying host.

Virtual machines provide many advantages over physical servers in terms of provisioning, management, configuration, and automation. While a new physical server may take days or weeks to acquire, provision, and configure, a new virtual machine can generally be spun up in minutes, or even seconds in some cases.

Because a virtual machine is abstracted from the underlying physical hardware, it has a degree of mobility and flexibility that is simply not possible with physical servers. Virtual machines can be moved seamlessly between hosts while they are running. Since a virtual machine is essentially a set of files, typically kept on shared storage, rather than a set of physical hardware, it is easy to move and to change which host provides its compute and memory.

We mentioned earlier that a physical server is generally well-suited for a single tenant (customer or consumer). By its very nature, a virtual machine is much better suited for multi-tenant environments, where many different companies may each use their own virtual machines, all running on a single hypervisor host or a cluster of hosts.

Types of VMs

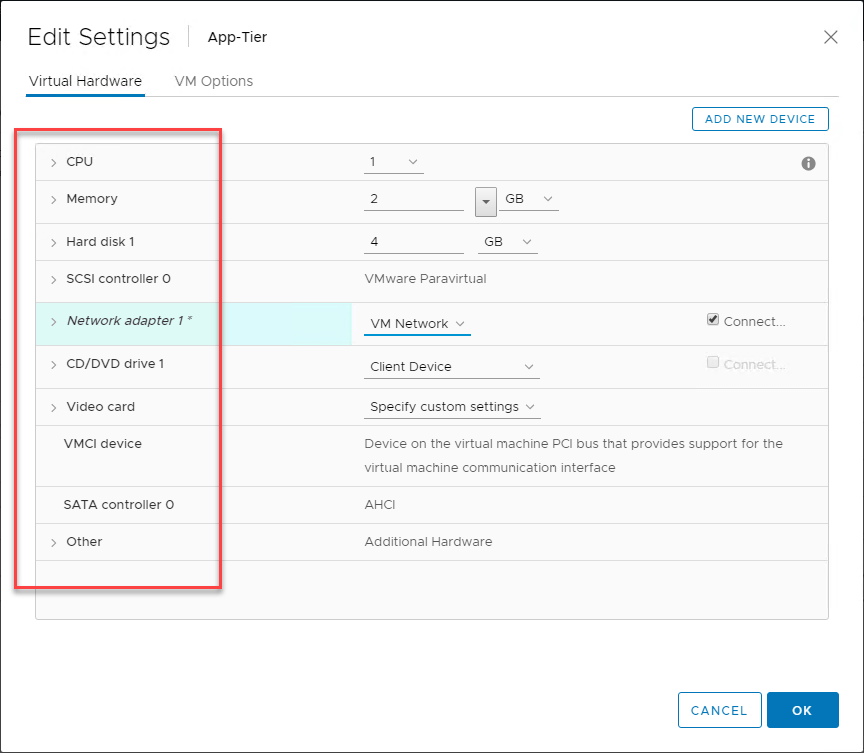

While there is no physical form factor you can put your arms around for a virtual machine, there is the concept of “virtual hardware” for a VM. Taking VMware vSphere as an example, when you look at the VM settings, you can see the virtual hardware that makes up the virtual machine. This typically includes at least one processor, memory, storage, and network hardware.

Beyond the virtual hardware itself, there are several other VM types and related terms worth noting:

- Persistent – A term generally used in VDI environments for a VM that is kept, rather than destroyed, after being used

- Non-persistent – A term generally used in VDI environments for a short-lived VM that is provisioned only when needed and discarded afterward

- Thick provisioned – A VM disk whose full capacity is committed on the datastore when it is created; an eager-zeroed thick disk is also fully “zeroed” out at creation

- Thin provisioned – A VM disk that consumes (and zeroes out) datastore space only as data is written. This effectively allows “overprovisioning” of storage, since you can assign more storage to your VMs than you physically have available

- Virtual Appliances – Prepackaged VMs that, in VMware vSphere, can be deployed from OVA/OVF templates, which makes provisioning an appliance extremely easy

- vApps – A vSphere concept that allows logically grouping virtual machines together so they can be managed and administered as a single entity

- Generation 1 – In Hyper-V, this is the legacy VM configuration. The generation determines which virtual hardware and features a VM supports; Generation 1 VMs use legacy BIOS-based firmware and lack some features available to Generation 2 VMs

- Generation 2 – The newer Hyper-V VM configuration, which uses UEFI-based firmware and supports the latest features and capabilities
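Here is a small worked example of the thin-provisioning point. This Python sketch compares how much storage has been promised to VMs with how much the datastore physically holds. The `ThinDisk` class, the VM names, and every size are hypothetical values chosen for illustration. They are not output from vSphere or Hyper-V.

```python
from dataclasses import dataclass


@dataclass
class ThinDisk:
    """A thin-provisioned virtual disk (hypothetical example values, in GiB)."""
    name: str
    provisioned_gib: int  # size the guest OS sees
    used_gib: int         # space actually written, and so consumed on the datastore


def overprovisioning_ratio(disks: list[ThinDisk], capacity_gib: int) -> float:
    """Provisioned capacity divided by physical capacity (>1.0 means overprovisioned)."""
    return sum(d.provisioned_gib for d in disks) / capacity_gib


def usage_ratio(disks: list[ThinDisk], capacity_gib: int) -> float:
    """Fraction of the datastore that is actually consumed today."""
    return sum(d.used_gib for d in disks) / capacity_gib


disks = [
    ThinDisk("web01", provisioned_gib=500, used_gib=120),
    ThinDisk("db01", provisioned_gib=1000, used_gib=400),
    ThinDisk("app01", provisioned_gib=500, used_gib=80),
]
capacity_gib = 1000  # hypothetical datastore size

print(f"provisioned/physical: {overprovisioning_ratio(disks, capacity_gib):.2f}")  # 2.00
print(f"used/physical:        {usage_ratio(disks, capacity_gib):.2f}")             # 0.60
```

A provisioned-to-physical ratio above 1.0 means the datastore is overprovisioned. That is safe only while actual usage stays below capacity. This is why thin-provisioned datastores need space monitoring: if usage reaches 100%, VMs can run out of disk even though their guest file systems still report free space.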

Physical vs Virtual Machine Feature Comparison

While physical servers and virtual machines are constructed very differently, they do share similarities. When it comes to connecting to a “physical server” vs a “virtual server”, the experience from a client perspective is exactly the same. Applications generally do not care whether they are connecting to a physical server or a virtual machine, because virtual machines run the same operating systems as physical servers, including Windows Server and Linux.

As long as the required resources are presented by either a physical server or a virtual machine, an application can perform the same regardless of whether the server is physical or virtual. What about comparing physical servers and virtual machines in other ways? Let’s take a look at the following comparisons.

- Costs

- Physical footprint

- Lifespan

- Migration

- Performance

- Efficiency

- Disaster Recovery and High Availability

Costs

Even though the cost of physical hardware has come down considerably in terms of processing power per dollar, physical hardware is still expensive. Depending on its specifications, a single physical server can cost anywhere from a few thousand to tens of thousands of dollars.

Looking at the cost of a virtual machine can be a more abstract exercise, since you can create as many VMs on a hypervisor host as its hardware can support. However, VMs do carry a “cost”: each one consumes a “slice” of the hardware capacity and performance of the physical host, which you paid for when purchasing the hardware.

Products like VMware’s vRealize Operations Manager can run continuous cost analysis based on allocated processors, RAM, and consumed storage. This provides tangible information about the costs of your individual VMs.

However, when you compare dedicating physical server hardware to a single workload with running many workloads on a single hypervisor host, VMs are a much more cost-effective and efficient use of the physical resources in your enterprise data center.
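One simple way to make the “slice of the host” idea concrete is this: amortize the host's purchase price over its service life, then charge each VM its share of the allocated CPU and memory. The sketch below assumes a hypothetical host price, a 48-month life, and a 50/50 CPU/RAM weighting. It is not how vRealize Operations Manager calculates costs, and it ignores power, cooling, licensing, and storage.

```python
from dataclasses import dataclass


@dataclass
class Host:
    price_usd: float       # hypothetical purchase price
    lifespan_months: int   # amortization period
    vcpu_capacity: int     # vCPUs you plan to allocate on this host
    ram_gib_capacity: int  # RAM you plan to allocate on this host


@dataclass
class VM:
    name: str
    vcpus: int
    ram_gib: int


def monthly_vm_cost(host: Host, vm: VM, cpu_weight: float = 0.5) -> float:
    """Charge a VM its weighted share of the host's amortized monthly cost."""
    monthly_host_cost = host.price_usd / host.lifespan_months
    cpu_share = vm.vcpus / host.vcpu_capacity
    ram_share = vm.ram_gib / host.ram_gib_capacity
    share = cpu_weight * cpu_share + (1 - cpu_weight) * ram_share
    return monthly_host_cost * share


host = Host(price_usd=24_000, lifespan_months=48, vcpu_capacity=64, ram_gib_capacity=512)
vm = VM("app01", vcpus=4, ram_gib=32)
print(f"{vm.name}: ${monthly_vm_cost(host, vm):.2f}/month")  # app01: $31.25/month
```

With these numbers, 16 identically sized VMs together absorb the host's full $500 monthly cost. A workload on a dedicated server with the same price and lifespan would carry that whole $500 alone.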

Physical Footprint

The physical footprint of physical servers can certainly be extensive. Whether a server is a tower, rackmount, or blade chassis, space is required to accommodate its physical form factor. If every workload serving a single solution, application, or set of users has its own physical server, the physical space required adds up quickly.

Virtual machines, on the other hand, enable what is known as server consolidation. Over the past decade or more, many organizations have moved from a 1:1 relationship between physical servers and applications to virtualized environments that run 10, 20, 50, or more VMs per physical hypervisor host.

VMs are certainly a more efficient use of physical space in the enterprise data center when compared to physical servers each running a single workload.
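A quick back-of-the-envelope calculation shows how consolidation shrinks rack space. The consolidation ratio (25 VMs per 2U host) and the server sizes below are hypothetical assumptions. Real designs also reserve spare hosts for failover and account for shared storage and networking gear.

```python
import math


def rack_units_needed(workloads: int, workloads_per_server: int, ru_per_server: int) -> int:
    """Rack units required to run `workloads` at a given density per server."""
    servers = math.ceil(workloads / workloads_per_server)
    return servers * ru_per_server


WORKLOADS = 100  # hypothetical number of applications to host

physical_ru = rack_units_needed(WORKLOADS, workloads_per_server=1, ru_per_server=1)
virtual_ru = rack_units_needed(WORKLOADS, workloads_per_server=25, ru_per_server=2)

print(f"one workload per 1U server: {physical_ru}U")  # 100U
print(f"25 VMs per 2U host:         {virtual_ru}U")   # 8U
```

In this example the physical approach needs more than two full 42U racks, while the virtualized hosts fit in a fraction of one.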

Lifespan

The lifespan of a physical server compared to a VM makes for an interesting comparison. In most enterprise environments, physical server hardware has a lifespan of roughly 3 to 5 years. This means that workloads running on the physical server hardware need to be migrated off once that lifespan has been reached.

Since virtual machines are abstracted from the underlying hardware of a physical server, virtual machine lifespans can be much longer than the physical hardware on which they reside. After the lifespan has been reached for the underlying hypervisor host, a new hypervisor host can be provisioned in parallel with the current host and the VMs can be migrated over seamlessly. After this, the old physical hypervisor hardware can be decommissioned.

On the other side of the coin, with strong automation capabilities, virtual machines can be provisioned ephemerally and spun up and down as needed. A classic example of this is non-persistent VMs that are provisioned in a VDI environment as needed. After a user logs off, the non-persistent VM is destroyed.

Migration

When comparing migration for physical hardware vs virtual machines, physical server migration is much more difficult and involves many more complexities. When migrating a physical server to new hardware, there are two main options:

- Take an image of the physical server and apply the image to new hardware

- Migrate the software from the old physical server to a new physical server

Option 1 requires the least effort. However, it may be the most problematic, since the image contains drivers and other references to the old physical server’s hardware. This can result in blue screens or other hardware issues after the image is applied. A maintenance window would be required, and the application(s) hosted by the physical server would incur an outage during that period.

Option 2 can require the heaviest lifting since migrating software/applications to a new server can be complicated, depending on the software/application. A maintenance period would most likely be needed for migrating software/applications from one physical server to another.

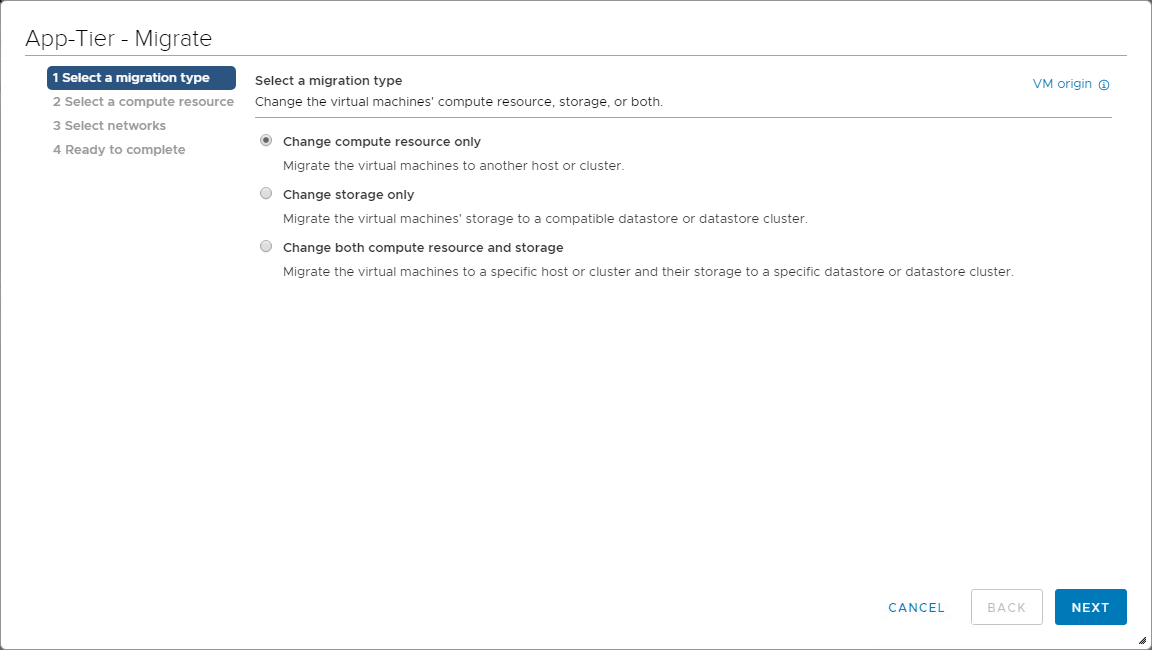

By comparison, virtual machine migration is much easier. Because virtual machines are abstracted from the underlying hypervisor host hardware, moving them to new hypervisor hardware is a simple hypervisor-level migration: a VMware “vMotion” or a Microsoft Hyper-V “Live Migration”, in the case of those hypervisors.

The great thing about hypervisor-level migrations such as vMotion and Live Migration is that they can be performed while the VM is running. This means your application can remain available during the process! Ease of migration is a clear advantage of virtual machines over physical servers.
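Live migration is commonly described as an iterative pre-copy process. The VM's memory is copied to the destination host while the VM keeps running, and pages the guest dirties during each pass are re-sent in the next pass. Once the remaining set is small, the VM is paused briefly for a final copy and switchover. The simulation below is a simplified model of that idea under assumed page counts and copy/dirty rates. It is not the actual vMotion or Live Migration algorithm, which include many additional optimizations.

```python
def precopy_migration(total_pages: int, copy_rate: int, dirty_rate: int,
                      stop_threshold: int, max_rounds: int = 30) -> tuple[int, int, int]:
    """Simulate iterative pre-copy live migration (hypothetical, simplified model).

    Rates are in pages per second. Returns (live_rounds, pages_copied_live,
    pages_copied_while_paused).
    """
    to_copy = total_pages  # the first pass copies all of the VM's memory
    copied_live = 0
    rounds = 0
    while to_copy > stop_threshold and rounds < max_rounds:
        copied_live += to_copy
        rounds += 1
        # Copying this pass takes to_copy / copy_rate seconds; the running guest
        # dirties dirty_rate pages per second meanwhile, and those must be re-sent.
        to_copy = min(total_pages, to_copy * dirty_rate // copy_rate)
    # Final step: pause the VM briefly and copy whatever is still dirty.
    return rounds, copied_live, to_copy


rounds, live, paused = precopy_migration(
    total_pages=1_000_000, copy_rate=100_000, dirty_rate=10_000, stop_threshold=1_000)
pause_ms = paused * 1000 / 100_000  # time to copy the last pages at copy_rate
print(rounds, live, paused, pause_ms)  # 3 1110000 1000 10.0
```

Here the copy rate is ten times the dirty rate, so each pass shrinks the remaining work tenfold and the final pause is short. If the guest dirties memory as fast as it can be copied, the passes never converge. This is why VMs that write heavily to memory are the hardest to migrate live.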

Performance

Performance is an area where physical (bare-metal) servers typically shine. In fact, one of the most common reasons still seen for running a workload on a physical server rather than in a virtual machine is the need for the absolute maximum performance for a business-critical application. Virtualized environments incur a small amount of overhead from the hypervisor.

However, it should be noted that the gap between VM and bare-metal performance has become very narrow as hypervisor CPU schedulers have improved. For most organizations, choosing a physical server for performance reasons comes down to the need for zero contention from other VMs competing for the same resources on a shared hypervisor host.
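Contention on a shared host is often gauged by the ratio of allocated vCPUs to physical cores. The sketch below computes that ratio for a hypothetical host. The 1.0 threshold is a simplifying assumption, not a vendor rule: it counts physical cores only and ignores hyperthreading and actual load.

```python
def vcpu_to_core_ratio(vm_vcpus: list[int], physical_cores: int) -> float:
    """Total vCPUs allocated to VMs divided by the host's physical cores."""
    return sum(vm_vcpus) / physical_cores


def is_overcommitted(vm_vcpus: list[int], physical_cores: int) -> bool:
    """Simplified rule: more vCPUs than physical cores means VMs may contend."""
    return vcpu_to_core_ratio(vm_vcpus, physical_cores) > 1.0


host_cores = 32                     # hypothetical host
vms = [8, 8, 8, 8, 4, 4, 8]         # vCPUs per VM on that host (48 total)
print(vcpu_to_core_ratio(vms, host_cores))  # 1.5
print(is_overcommitted(vms, host_cores))    # True
```

A ratio above 1.0 means vCPUs must take turns on physical cores, so a busy VM may have to wait to be scheduled. In vSphere this shows up as CPU ready time. A dedicated physical server never competes with other tenants this way.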

Efficiency

Efficiency is certainly an advantage of running virtual machines rather than dedicating a physical server to a single workload. When you factor in the cost of power, cooling, and data center space per rack unit (“rack-U”), hosting applications and workloads on physical servers rather than VMs becomes very expensive.

When you can run many VMs, even tens of them, per hypervisor host instead of a single workload per physical server, VMs can be many times more efficient than physical servers.

Virtual machines have allowed organizations to drastically shrink their data center footprints, resulting in power, cooling, and space savings across the board.

In terms of resource efficiency, dedicating physical servers to single workloads leaves a great deal of resources sitting idle. Virtual machines make it possible to put the available CPU cycles, memory, and storage capacity to much fuller use.
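The idle-capacity argument can be quantified with average utilization figures. The sketch below assumes 20 hypothetical single-workload servers with 16 cores each, each averaging 2 busy cores. It then sizes replacement hypervisor hosts at a 75% target utilization. Averages hide peaks, so treat this as a rough sizing sketch rather than a capacity plan.

```python
import math


def idle_fraction(avg_busy_cores: list[float], cores_per_server: int) -> float:
    """Share of total core capacity left idle when each workload has its own server."""
    capacity = cores_per_server * len(avg_busy_cores)
    return 1 - sum(avg_busy_cores) / capacity


def hosts_needed(avg_busy_cores: list[float], cores_per_host: int,
                 target_utilization: float) -> int:
    """Hypervisor hosts needed to carry the same average demand at a target utilization."""
    usable_cores_per_host = cores_per_host * target_utilization
    return math.ceil(sum(avg_busy_cores) / usable_cores_per_host)


servers = [2.0] * 20  # 20 hypothetical servers, each averaging 2 busy cores
print(idle_fraction(servers, cores_per_server=16))                        # 0.875
print(hosts_needed(servers, cores_per_host=32, target_utilization=0.75))  # 2
```

In this example, 87.5% of the physical cores sit idle on average, while the same average demand fits on two 32-core hosts.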

Disaster Recovery and High-Availability

Running any business-critical workload, whether on physical server hardware or in virtual machines, requires a way to protect your applications and data from disaster and to keep them available. Virtual machines have a definite advantage over physical servers in terms of disaster recovery (DR) and high availability (HA).

As mentioned, virtual machines are abstracted from the underlying physical hardware. This makes them extremely mobile: they can be moved to a different hypervisor host or to a different physical location altogether. This opens up several capabilities for protecting applications and data in disaster recovery scenarios.

With virtual machines, VM snapshots (vSphere) or checkpoints (Hyper-V) can be leveraged to redirect new writes to a separate delta disk. This lets backup solutions capture a consistent, point-in-time copy of the VM’s disks. Changed Block Tracking (vSphere) and Resilient Change Tracking (Hyper-V) can then be used to capture only the changes made since the last backup.
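The idea behind Changed Block Tracking is that the hypervisor records which disk blocks were written since the last backup. An incremental backup then reads only those blocks instead of scanning the whole disk. The toy model below mimics that bookkeeping in memory. The `TrackedDisk` class, the 8-block disk, and the 4-byte block size are hypothetical. Real CBT (vSphere) and RCT (Hyper-V) are queried by backup software through each platform's own APIs.

```python
class TrackedDisk:
    """In-memory virtual disk that records which blocks change between backups."""

    def __init__(self, num_blocks: int, block_size: int = 4):
        self.blocks = [bytes(block_size) for _ in range(num_blocks)]
        self.changed: set[int] = set()  # block indices written since the last backup

    def write(self, index: int, data: bytes) -> None:
        self.blocks[index] = data
        self.changed.add(index)  # the "hypervisor" notes the change as it happens

    def full_backup(self) -> dict[int, bytes]:
        self.changed.clear()  # tracking restarts from this point
        return dict(enumerate(self.blocks))

    def incremental_backup(self) -> dict[int, bytes]:
        delta = {i: self.blocks[i] for i in sorted(self.changed)}  # only changed blocks
        self.changed.clear()
        return delta


def restore(full: dict[int, bytes], *incrementals: dict[int, bytes]) -> list[bytes]:
    """Apply incrementals, oldest first, on top of the full backup."""
    image = dict(full)
    for delta in incrementals:
        image.update(delta)
    return [image[i] for i in sorted(image)]


disk = TrackedDisk(num_blocks=8)
base = disk.full_backup()          # copies all 8 blocks
disk.write(2, b"AAAA")
disk.write(5, b"BBBB")
inc1 = disk.incremental_backup()   # copies blocks 2 and 5 only
disk.write(5, b"CCCC")
inc2 = disk.incremental_backup()   # copies block 5 only
print(len(base), len(inc1), len(inc2))           # 8 2 1
print(restore(base, inc1, inc2) == disk.blocks)  # True
```

The full backup copies all 8 blocks, the first incremental copies 2, and the second copies only 1. The restore still reproduces the current disk exactly.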

In addition, backing up virtual machines at the hypervisor level captures everything required to restore the VM to a functioning state, including its configured virtual hardware.

With physical server backups, at best, you can capture the operating system and all data stored on the server. However, the physical hardware cannot be magically duplicated. If a physical server fails, you will have to procure compatible server hardware before you can restore your backups.

Virtualization clusters also make high availability very easy. Because the hardware is abstracted from the virtual machine, VMs can run on any hypervisor host in the cluster. If a hypervisor host fails, another host in the cluster can take ownership of its VMs and restart them.
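The failover behavior described above can be illustrated with a toy model: when a host fails, each of its VMs is restarted on a surviving host with enough free memory. The greedy placement rule (largest VM first, onto the host with the most free RAM), the host names, and the memory sizes are all hypothetical. vSphere HA and Hyper-V failover clustering apply their own admission-control and placement logic.

```python
from dataclasses import dataclass, field


@dataclass
class HostNode:
    name: str
    ram_gib: int
    vms: dict[str, int] = field(default_factory=dict)  # VM name -> RAM in GiB

    def free_ram(self) -> int:
        return self.ram_gib - sum(self.vms.values())


def fail_over(failed: HostNode, survivors: list[HostNode]) -> dict[str, str]:
    """Restart each VM from a failed host on a surviving host with room for it."""
    placements: dict[str, str] = {}
    # Place the largest VMs first so they are less likely to be left without a host.
    for vm, ram in sorted(failed.vms.items(), key=lambda kv: kv[1], reverse=True):
        candidates = [h for h in survivors if h.free_ram() >= ram]
        if not candidates:
            placements[vm] = "UNPLACED"  # not enough spare capacity in the cluster
            continue
        target = max(candidates, key=lambda h: h.free_ram())  # most free RAM wins
        target.vms[vm] = ram
        placements[vm] = target.name
    failed.vms.clear()  # the failed host no longer runs anything
    return placements


host_a = HostNode("host-a", 256, {"db01": 64, "web01": 16, "app01": 32})
host_b = HostNode("host-b", 256, {"web02": 96})
host_c = HostNode("host-c", 256, {"db02": 128})
placements = fail_over(host_a, [host_b, host_c])
print(placements)  # {'db01': 'host-b', 'app01': 'host-c', 'web01': 'host-b'}
```

A VM marked `UNPLACED` is exactly what cluster admission control is meant to prevent. It does this by keeping enough spare capacity to restart the VMs of at least one failed host.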

Physical servers can be clustered as well. Windows Server Failover Clustering has long been the standard in the enterprise data center for clustering physical servers to provide high availability from an application/data perspective. If the active node fails, another physical server in the cluster takes over running the application and hosting the data.

Virtual machines offer the simplest means of protecting your data at the site level, since they can easily be replicated to a different environment housed in a separate location, such as a DR facility. Without the right data protection solution, physical servers can be more challenging to protect at the site level.

How Do You Choose?

The widespread adoption of virtualization makes clear which way most organizations have decided between physical servers and virtual machines. For most, the advantages virtual machines offer in terms of cost, physical footprint, lifespan, migration, efficiency, and disaster recovery/high availability far outweigh the benefits of running a single workload on a single physical server.

Does this mean you would never choose to run applications and host data on physical servers? No. Physical servers are still very much a part of the enterprise data center environment. There are still use cases for running an application on a physical server, whether for performance reasons or perhaps the need to attach physical devices directly to the server.

The choice comes down to both a technology and business decision for your organization. In most environments, the majority of workloads will be virtual machines and containers, with a small number of physical servers running various applications.

Physical Server and Virtual Machine Backups

Whether you host your data and applications on physical servers or virtual machines, you must protect them. Both physical servers and virtual machines can fail, which underscores the need to properly protect your data and applications. A unified data protection/backup solution that can protect both physical and virtual workloads simplifies disaster recovery.

With BDRSuite, you get an all-in-one solution that can protect both the physical servers and the virtual machines running in your environment. Vembu allows you to treat physical servers like VMs, since physical server backups can be restored as VMs (P2V) in a disaster. It also makes it easy to copy physical server backups offsite along with your virtual machine backups. Capabilities available for both physical and virtual machines include the following:

- Changed Block Tracking

- Automated Backup Verification

- Quick VM Recovery

- Offsite or Remote Backup Copy

- Vembu Universal Recovery

- Application-aware backups

- Migration support – V2V, P2V, V2P
