Storage is one of the key components of your infrastructure. In virtualized environments especially, storage is what makes virtualization possible. The options range from traditional architectures to next-generation, software-defined storage architectures that are making waves in the world of virtualization.

When it comes to Microsoft Hyper-V, there are many different flavors of storage to choose from. They differ in capability, complexity, cost, and underlying technology.

Table of Contents

- Why Data is Important

- Connecting Hyper-V Hosts to Storage Infrastructure

- Direct Attached Storage

- Shared Storage

- Cluster Shared Volumes

- Storage Spaces Direct

- ReFS

- Configuring Hyper-V Direct Attached Storage

- Considerations for Direct Attached Storage Locations

This blog on Hyper-V Storage Configuration is a three-part series. We will cover a number of different storage configurations with Microsoft Hyper-V, including their characteristics, features, configuration, and use cases.

- In this first part, we’ll get started with an overview of Hyper-V related storage technologies – Direct Attached Storage, Shared Storage, Cluster Shared Volumes, Storage Spaces Direct & ReFS. We’ll also look at the process of configuring Hyper-V Direct Attached Storage.

- In the second part, we’ll discuss the process of configuring Hyper-V Shared Storage and the process of configuring Cluster Shared Volumes for Hyper-V

- In the third part, we’ll look at the process of configuring Storage Spaces Direct and Resilient File System (ReFS)

Let’s get started with a brief overview of the importance of data and then discuss the various storage technologies.

Why Data is Important

Before taking a look at the various types of storage that can be connected to Hyper-V hosts, let’s take a step back to begin with and think about why data is important. The most important reason any IT infrastructure exists is to serve out or allow access to data of some sort. After all, customers, end-users, and others do not traverse the Internet or any business systems simply for the ride. There is always data at play somewhere in the chain of network communications or server resources that is being accessed, sent, consumed, or saved. Data is the heart of IT infrastructure.

Generally speaking, the other components of the virtualization infrastructure including compute and network serve the purpose of facilitating data access in some form or fashion. The storage infrastructure is the aspect of virtualized environments that is directly responsible for the data housed in the system. The hypervisor is responsible for brokering access to and from the guests to the storage infrastructure.

Data has been described as the “modern gold” of the Internet and future revenue. Businesses today are capturing massive amounts of data at scales that were unimaginable just a few years back. Due to the upsurge in machine-learning technology and capabilities, organizations can analyze and make useful extrapolations from the data that is collected, furthering business interests.

Without diving too much further down the rabbit hole of data collection and machine-learning, the bottom line with data is that it is crucially important. This is what is driving today’s businesses that are making use of technology. Virtualization administrators must understand the nuances of how data is stored, accessed, best-provisioned, and backed up.

Without further ado, let’s take a look at the different options for connecting Hyper-V hosts to the storage infrastructure and the advantages and disadvantages of each method and technology.

Connecting Hyper-V Hosts to Storage Infrastructure

Several technologies drive storage for the Hyper-V hypervisor. Some of them, particularly in software-defined storage, arrived only in recent versions of Windows Server.

Let’s take a look at the following ways of connecting Hyper-V hosts to storage infrastructure, along with some of the ancillary storage technologies that extend the capabilities of Hyper-V storage, and how each weighs into the storage strategy for your data:

- Direct Attached Storage

- Shared Storage

- Cluster Shared Volumes

- Storage Spaces Direct

- ReFS

Direct Attached Storage

When looking at the options for Hyper-V storage, Direct Attached Storage or DAS is the simplest and easiest storage solution to implement for virtual machine storage. DAS is also generally the cheapest type of storage when compared to options involving SAN storage, since far fewer components and complexities are involved.

As the name suggests, DAS is storage that is “directly attached” to the Hyper-V host. It is generally utilized with single standalone Hyper-V hosts and usually resides physically within the chassis of the Hyper-V host itself as internal disks. However, it is also possible to configure “external” direct-attached storage by means of a Serial Attached SCSI (SAS) connection to an external storage device. That device can be configured as “just a bunch of disks” (JBOD), where Hyper-V manages the disks directly, or with RAID configured on the enclosure for redundancy.

***Note*** Storage Spaces Direct uses direct-attached storage; however, that storage is “shared” among the hosts in a Windows Server Failover Cluster running Hyper-V using a software-defined approach. We will take a look at Storage Spaces Direct shortly.

Shared Storage

Moving up into the realm of shared storage opens up a lot of new possibilities when it comes to the high availability of your data and the mobility of your virtual workloads. To create a Windows Failover Cluster that runs Hyper-V, the cluster needs shared storage so that the nodes can work together in hosting production workloads. Most of the advanced availability features of the Hyper-V hypervisor, failover clustering in particular, depend on shared storage.

As mentioned, high availability is one of the reasons you run a Windows Failover Cluster to begin with. A Windows Failover Cluster with shared storage running Hyper-V virtual machines is able to restart virtual machines on a healthy host if the host on which the VMs were running fails. Shared storage also makes it possible to perform maintenance without downtime for the VMs. VMs can be migrated from one host to another so that patching or other host maintenance that requires taking the host down can be carried out.
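The restart behavior described above can be illustrated with a small Python sketch. It is a toy model only: the `Host` class, the `fail_over` function, and the "fewest VMs wins" placement rule are assumptions made for illustration and do not reflect how Windows Failover Clustering actually chooses a target node.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Host:
    """Hypothetical stand-in for a Hyper-V cluster node."""
    name: str
    healthy: bool = True
    vms: list[str] = field(default_factory=list)


def fail_over(hosts: list[Host], failed_name: str) -> dict[str, str]:
    """Mark one host as failed and restart its VMs on the surviving hosts.

    Returns a mapping of VM name -> new host name. Placement simply picks
    the healthy host with the fewest VMs (an assumption for this sketch).
    """
    failed = next(h for h in hosts if h.name == failed_name)
    failed.healthy = False
    survivors = [h for h in hosts if h.healthy]
    if not survivors:
        raise RuntimeError("no healthy host left to restart VMs on")

    placement = {}
    for vm in failed.vms:
        target = min(survivors, key=lambda h: len(h.vms))
        target.vms.append(vm)
        placement[vm] = target.name
    failed.vms = []
    return placement


if __name__ == "__main__":
    cluster = [
        Host("hv01", vms=["sql01", "web01"]),
        Host("hv02", vms=["dc01"]),
        Host("hv03"),
    ]
    print(fail_over(cluster, "hv01"))
```

The restart is only possible because every surviving host can reach the same virtual disk files, which is exactly what shared storage provides.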

The traditional approach to shared storage generally involves provisioning storage on a Storage Area Network or SAN and attaching it to the Hyper-V hosts using block storage protocols such as iSCSI or Fibre Channel. (Hyper-V does not use NFS for virtual machine storage; its file-based option is SMB 3.0.) The storage is provisioned on the storage appliance and presented to the Hyper-V hosts, and multiple connections, or multipathing, are configured from all hosts to allow simultaneous access to the storage.

In order for Hyper-V hosts to all have simultaneous access to the storage volumes, a new type of shared volume was introduced in Windows Server 2008 R2 called Cluster Shared Volumes or CSVs. What are CSVs?

Cluster Shared Volumes

Prior to Windows Server 2008 R2, simultaneous reads and writes to a particular volume from all Hyper-V hosts in the cluster were not possible. CSVs are a special kind of shared volume, formatted as either NTFS or ReFS (more detail on this later), that allows simultaneous read and write operations from all nodes in the Windows Failover Cluster.

CSV is a general-purpose, clustered file system that is layered above NTFS or ReFS that allows for clustered virtual machines and scale-out file shares to store application data. A CSV is able to get around the locks on the file system by utilizing metadata that is updated by a coordinator node in the Windows Failover Cluster that owns the LUN.
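As a rough mental model of the coordinator role described above, the following Python sketch lets every node write data directly while metadata changes are applied only by a single coordinator. The class and method names are hypothetical, and the real CSV implementation is far more involved than this toy.

```python
from __future__ import annotations


class ToyClusterSharedVolume:
    """Toy model: metadata changes go through one coordinator, data I/O does not."""

    def __init__(self, coordinator: str, nodes: list[str]):
        if coordinator not in nodes:
            raise ValueError("coordinator must be a cluster node")
        self.coordinator = coordinator
        self.nodes = set(nodes)
        self.metadata = {}      # file name -> created flag, changed only by the coordinator
        self.data = {}          # (file name, offset) -> bytes, written by any node
        self.metadata_ops = []  # (applied by, requested by, operation)

    def _check_node(self, node: str) -> None:
        if node not in self.nodes:
            raise ValueError(f"{node} is not a member of the cluster")

    def create_file(self, node: str, name: str) -> None:
        """Any node may request a metadata change; the coordinator applies it."""
        self._check_node(node)
        self.metadata_ops.append((self.coordinator, node, f"create {name}"))
        self.metadata[name] = True

    def write(self, node: str, name: str, offset: int, payload: bytes) -> None:
        """Data writes go straight to the volume from whichever node issues them."""
        self._check_node(node)
        if name not in self.metadata:
            raise FileNotFoundError(name)
        self.data[(name, offset)] = payload


if __name__ == "__main__":
    csv = ToyClusterSharedVolume("hv01", ["hv01", "hv02"])
    csv.create_file("hv02", "vm1.vhdx")          # applied by hv01, requested by hv02
    csv.write("hv01", "vm1.vhdx", 0, b"boot")
    csv.write("hv02", "vm1.vhdx", 4096, b"data")
    print(csv.metadata_ops)
```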

Storage Spaces Direct

Storage Spaces Direct is perhaps the most exciting development in Hyper-V storage in recent versions. Storage Spaces Direct, or S2D, arrived with Windows Server 2016 as Microsoft’s entry into true software-defined storage running on commodity hardware. As a direct competitor to VMware’s vSAN technology, S2D allows customers to run a software-defined storage architecture that provides tremendous flexibility in provisioning, management, and scalability.

Using Storage Spaces Direct, customers can provision shared storage across nodes in a Windows Failover Cluster running commodity hardware. This is configured using direct-attached storage in all the cluster nodes. The drives in each node are organized into a cache tier and a capacity tier, and the software combines these tiers across nodes into a single pool of software-defined storage.

With Windows Server 2019, Microsoft greatly improved the capabilities of Storage Spaces Direct and introduced many new features. These include support for ReFS and deduplication together at last, a simplified architecture, a “true two-node” cluster architecture that uses a USB drive as the witness, and better visibility into drive health and performance.

ReFS

ReFS or “Resilient File System” is another storage-related technology that continues to extend the features and capabilities of the file system when used in conjunction with Hyper-V. ReFS is a self-healing file system that is highly resilient to corruption. Its “block cloning” capability also provides significant performance benefits for operations such as copying or merging virtual disk files. As ReFS matures, it is likely to see much wider adoption, especially with Windows Failover Clustering and Hyper-V.
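To see why block cloning is fast, consider the simplified Python model below. Cloning a file only adds references to the clusters that already exist, and new space is allocated only when one of the shared clusters is later overwritten. The `ToyVolume` class and its methods are invented for this illustration and are not a ReFS API.

```python
class ToyVolume:
    """Toy model of block cloning with reference-counted clusters."""

    def __init__(self):
        self.clusters = {}   # cluster id -> bytes stored in that cluster
        self.refcount = {}   # cluster id -> number of file references
        self.files = {}      # file name -> ordered list of cluster ids
        self._next_id = 0

    def _allocate(self, data):
        cid = self._next_id
        self._next_id += 1
        self.clusters[cid] = data
        self.refcount[cid] = 1
        return cid

    def write_file(self, name, chunks):
        """Create a file by allocating one new cluster per chunk."""
        self.files[name] = [self._allocate(chunk) for chunk in chunks]

    def clone_file(self, src, dst):
        """Metadata-only copy: the new file points at the existing clusters."""
        ids = list(self.files[src])
        for cid in ids:
            self.refcount[cid] += 1
        self.files[dst] = ids

    def overwrite(self, name, index, data):
        """Writing to a shared cluster allocates a new one for this file only."""
        cid = self.files[name][index]
        if self.refcount[cid] > 1:
            self.refcount[cid] -= 1
            self.files[name][index] = self._allocate(data)
        else:
            self.clusters[cid] = data

    def read_file(self, name):
        return [self.clusters[cid] for cid in self.files[name]]


if __name__ == "__main__":
    vol = ToyVolume()
    vol.write_file("base.vhdx", [b"A", b"B"])
    vol.clone_file("base.vhdx", "clone.vhdx")
    print(len(vol.clusters))             # clone added no new clusters
    vol.overwrite("clone.vhdx", 1, b"C")
    print(vol.read_file("base.vhdx"), vol.read_file("clone.vhdx"))
```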

Now that we have an overview of the various storage technologies commonly used with Hyper-V, let’s take a deeper dive into the configuration of the various storage technologies, requirements, and other considerations.

Configuring Hyper-V Direct Attached Storage

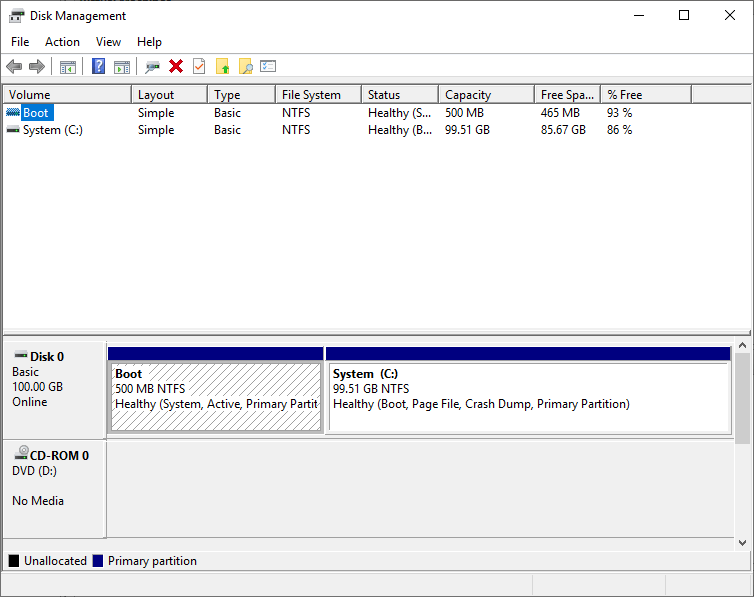

As mentioned earlier, Direct Attached Storage is generally the simplest form of storage that can be provisioned in a Hyper-V host. As soon as you install Windows Server with the Hyper-V role, you have Direct Attached Storage, even if the server has only a single drive. This is because Hyper-V by default places virtual machine storage on the same drive as Windows.

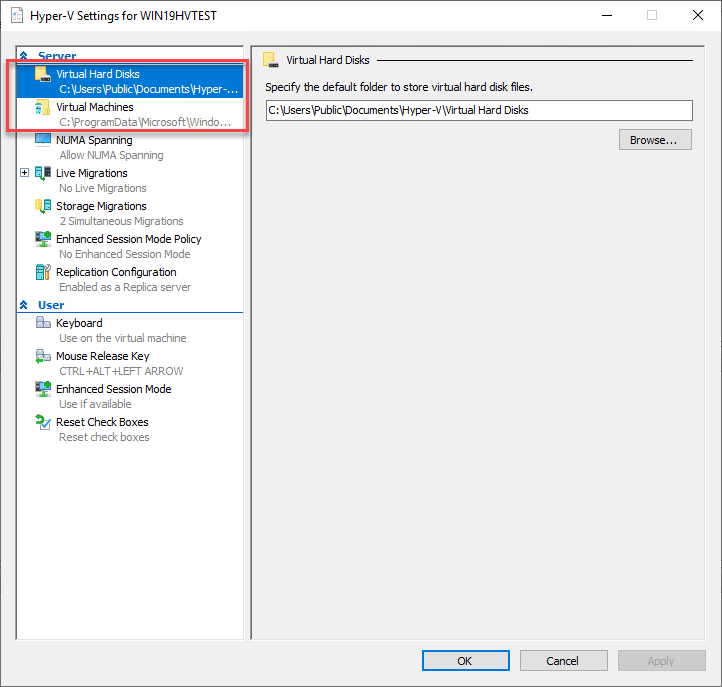

- The Hyper-V Virtual Machine configuration files are stored by default in the “C:\ProgramData\Microsoft\Windows\Hyper-V” directory.

- The Hyper-V Virtual Machine hard disk files are stored in the “C:\Users\Public\Documents\Hyper-V\Virtual Hard Disks” directory.

Beyond the default directories on the operating system volume, adding Hyper-V Direct Attached Storage is as simple as adding a new volume with free space, formatting the volume, and then configuring Hyper-V to use the new storage for configuration and virtual hard disk files.
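The three steps above map onto a handful of PowerShell cmdlets from the Storage and Hyper-V modules (`Initialize-Disk`, `New-Partition`, `Format-Volume`, `New-Item`, and `Set-VMHost`). The Python sketch below only builds the command strings so they can be reviewed before being run on a host; it does not execute anything. The disk number, drive letter, volume label, and folder layout are hypothetical values chosen for illustration.

```python
def build_das_commands(disk_number: int, drive_letter: str,
                       label: str = "VMStorage") -> list:
    """Return PowerShell commands that prepare a new disk for Hyper-V VM storage.

    Nothing is executed here; the caller decides how and where to run them.
    """
    letter = drive_letter.strip().upper()
    if len(letter) != 1 or not letter.isalpha():
        raise ValueError("drive_letter must be a single letter, e.g. 'V'")

    # Folder names are an assumption for this sketch, not a Hyper-V requirement.
    vm_path = f"{letter}:\\Hyper-V"
    vhd_path = f"{vm_path}\\Virtual Hard Disks"

    return [
        # 1. Bring the new disk online with a partition table.
        f"Initialize-Disk -Number {disk_number} -PartitionStyle GPT",
        # 2. Create one partition spanning the disk and format it.
        f"New-Partition -DiskNumber {disk_number} -UseMaximumSize -DriveLetter {letter}",
        f"Format-Volume -DriveLetter {letter} -FileSystem NTFS -NewFileSystemLabel '{label}'",
        # 3. Create the target folder and point Hyper-V at the new locations.
        f"New-Item -ItemType Directory -Path '{vhd_path}' -Force",
        f"Set-VMHost -VirtualMachinePath '{vm_path}' -VirtualHardDiskPath '{vhd_path}'",
    ]


if __name__ == "__main__":
    for command in build_das_commands(disk_number=1, drive_letter="V"):
        print(command)
```

Changing the host defaults with `Set-VMHost` affects where new virtual machines are created; existing VMs stay where they are until they are moved.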

Considerations for Direct Attached Storage Locations

As a Hyper-V best practice, if you are going to use Direct Attached Storage for production, you will want to change the default virtual machine storage location. Even on a standalone Hyper-V host, it is a better idea to have direct-attached storage that is dedicated to virtual machine storage. This is desirable from a disaster recovery standpoint as well as from a performance perspective, since a dedicated drive keeps virtual machine I/O separate from operating system I/O.

There may be cases where a very lightly used standalone host is provisioned with a single volume and its VMs are hosted on the operating system drive, as Hyper-V configures by default. Keep in mind that with a single Hyper-V host there is no high availability for the host itself, so you are relying entirely on disaster recovery.
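A quick way to spot hosts that still use the defaults is to check whether the configured VM paths sit on the operating system drive. The Python sketch below does this with the standard library's `ntpath` module, which parses Windows paths on any platform. The function name and the assumption that the system drive is `C:` are illustrative only.

```python
import ntpath

# Default locations used by Hyper-V, as listed earlier in this post.
DEFAULT_VM_CONFIG_PATH = r"C:\ProgramData\Microsoft\Windows\Hyper-V"
DEFAULT_VHD_PATH = r"C:\Users\Public\Documents\Hyper-V\Virtual Hard Disks"


def shares_system_drive(path: str, system_drive: str = "C:") -> bool:
    """Return True if the given Windows path lives on the system drive."""
    drive, _rest = ntpath.splitdrive(path)
    return drive.upper() == system_drive.upper()


if __name__ == "__main__":
    candidates = [DEFAULT_VM_CONFIG_PATH, DEFAULT_VHD_PATH, r"V:\Hyper-V"]
    for path in candidates:
        status = "on the OS drive" if shares_system_drive(path) else "dedicated drive"
        print(f"{path}: {status}")
```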

In the next post, we’ll look at the process of configuring Hyper-V Shared Storage and the process of configuring Cluster Shared Volumes for Hyper-V in detail.
