Quick Bites:

NIC teaming in Hyper-V refers to the process of combining multiple network interface cards (NICs) into a single logical NIC, also known as a team or virtual NIC. The goal of NIC teaming is to provide improved network performance, availability, and redundancy.

When multiple NICs are teamed together, the traffic can be distributed across them, reducing the load on any single NIC and increasing overall throughput. In addition, if one of the NICs fails, the team can automatically redirect traffic to the remaining NICs, ensuring that network connectivity is maintained.

Hyper-V supports two types of NIC teaming: Switch Independent and Switch Dependent. In Switch Independent mode, the physical switches are unaware of the team, so the team members can be connected to different switches and the teaming software on the host distributes the traffic. In Switch Dependent mode, the physical switch must be configured to take part in the team (statically or through LACP), and the team members are connected to the same switch.

NIC teaming can be configured using either Server Manager or Windows PowerShell. Not all network adapters are compatible with NIC teaming, so check the documentation for your hardware and software before attempting to configure a team.

Table of Contents

- Windows Server Native NIC Teaming

- Windows Server NIC Teaming Overview

- Configuring a Windows Server 2016 NIC Team

- Hyper-V Specific NIC Teaming Considerations

- Concluding Thoughts

One of the critical components of Hyper-V configuration is setting up network connections. This includes the provisioning of network adapters, cabling, virtual switches, traffic flows, and uplinks. If the Hyper-V network is configured improperly, problems can appear from the very beginning in the form of performance, availability, or stability issues.

Hyper-V includes robust network capabilities right out of the box that allow administrators to configure Hyper-V for a number of use cases. One of the native Windows Server features that Hyper-V can use is the ability to create NIC teams. NIC teaming has long provided increased network capacity and fault tolerance for network connections to Windows Servers, although it used to depend on vendor-supplied drivers. In recent versions of Windows, Microsoft provides these capabilities natively.

In this post, we will discuss the following:

How do Hyper-V administrators benefit from NIC teaming in Hyper-V? How is this configured? What are the use cases?

Windows Server Native NIC Teaming

In legacy versions of Windows, many Windows Server administrators relied heavily on third-party network card vendors to provide customized Windows drivers that enabled teaming. This was often problematic, as it required thoroughly testing new driver releases or battling problematic releases that caused teaming-related network issues. Additionally, relying on third-party drivers meant that you had to contact your network card vendor for support if you ran into any issues.

Since Windows Server 2012, Microsoft has included the ability to create network teams natively in the Windows Server operating system. This solves many of the problems administrators had with third-party drivers and utilities used to create the network card teams used in production. Microsoft supports the native teams created with Windows Server native teaming and generally, the teaming provided with Windows Server has proven to be a much more stable mechanism for creating production network teams.

Windows Server NIC Teaming Overview

Microsoft’s native NIC Teaming allows grouping between one and thirty-two physical Ethernet network adapters into one or more software-based virtual network adapters or teams. This provides fault tolerance and increased performance for production workloads. A few items to note regarding Windows Server NIC teams:

- May contain 1-32 physical adapters

- Aggregated into team NICs (tNICs), which are presented to the OS

- Teamed NICs can be connected to the same switch or, in Switch Independent mode, to different switches, as long as they are on the same subnet/VLAN

- Can be configured using the GUI or PowerShell

NICs that cannot be placed into a Windows Server NIC team:

- Hyper-V virtual network adapters that are Hyper-V Virtual Switch ports exposed as NICs in the host partition

- The KDNIC – kernel debug network adapter

- Non-Ethernet network adapters – WLAN/Wi-Fi, Bluetooth, Infiniband, etc
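The membership rules above can be sketched as a small pre-flight check. This is only an illustration in Python, not part of Windows: the `Adapter` class, its fields, and `team_problems` are hypothetical names invented for this example, and a real check would read adapter properties from the operating system instead.

```python
from dataclasses import dataclass

MAX_TEAM_MEMBERS = 32  # upper bound stated in the overview above


@dataclass(frozen=True)
class Adapter:
    """Hypothetical description of a network adapter (not a Windows API type)."""
    name: str
    media: str                     # "ethernet", "wifi", "bluetooth", "infiniband", ...
    is_host_vnic: bool = False     # Hyper-V Virtual Switch port exposed in the host partition
    is_kernel_debug: bool = False  # the KDNIC


def team_problems(adapters):
    """Return the reasons, if any, why these adapters cannot form a team."""
    problems = []
    if not 1 <= len(adapters) <= MAX_TEAM_MEMBERS:
        problems.append(f"a team needs 1-{MAX_TEAM_MEMBERS} adapters, got {len(adapters)}")
    for adapter in adapters:
        if adapter.media.lower() != "ethernet":
            problems.append(f"{adapter.name}: non-Ethernet adapter ({adapter.media})")
        if adapter.is_host_vnic:
            problems.append(f"{adapter.name}: Hyper-V virtual adapter in the host partition")
        if adapter.is_kernel_debug:
            problems.append(f"{adapter.name}: kernel debug adapter (KDNIC)")
    return problems


# Example: one valid Ethernet adapter and one Wi-Fi adapter
candidates = [Adapter("NIC1", "ethernet"), Adapter("Wi-Fi", "wifi")]
for problem in team_problems(candidates):
    print(problem)
```

An empty list from `team_problems` means none of the exclusions listed above apply; it says nothing about driver or switch compatibility.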

Advanced Network settings that are not compatible with NIC Teaming in Windows Server 2016:

- SR-IOV – Single-root I/O virtualization delivers data directly to the network adapter without passing it through the networking stack of the Windows Server host, so the team never sees the traffic and cannot inspect or redirect it

- Native host QoS – QoS policies set on the host that enforce minimum bandwidth limits reduce the overall throughput of the NIC team compared with running without those policies

- TCP Chimney – TCP Chimney offloads the entire networking stack directly to the NIC, so it is not compatible with NIC Teaming

- 802.1X authentication – 802.1X should not be used together with NIC Teaming; some switches do not permit configuring both on the same port

Configuring a Windows Server 2016 NIC Team

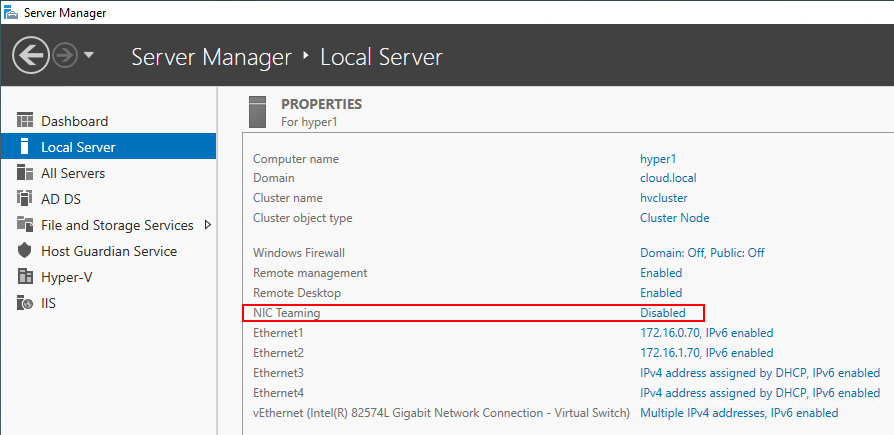

Configuring a NIC Team in Windows Server 2016 is easily accomplished via Server Manager or with PowerShell. Under the Local Server properties screen, you will see the NIC Teaming section, which is set to Disabled by default. Click the Disabled hyperlink.

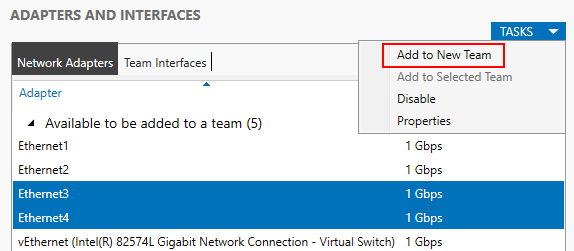

This will launch the NIC Teaming configuration dialog box, allowing the configuration of a new NIC Team. Select the NICs that you want to add to the team and pull down the Tasks dropdown menu to select the Add to New Team option.

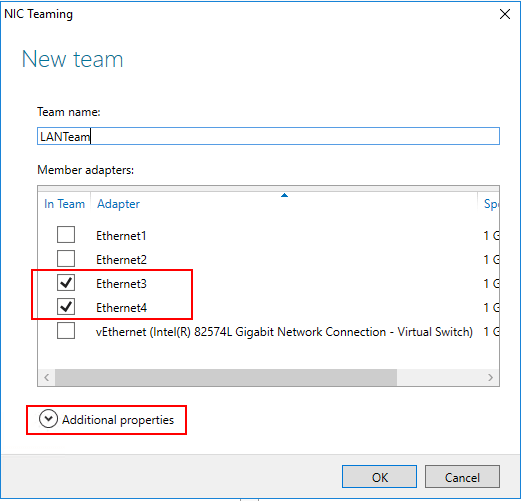

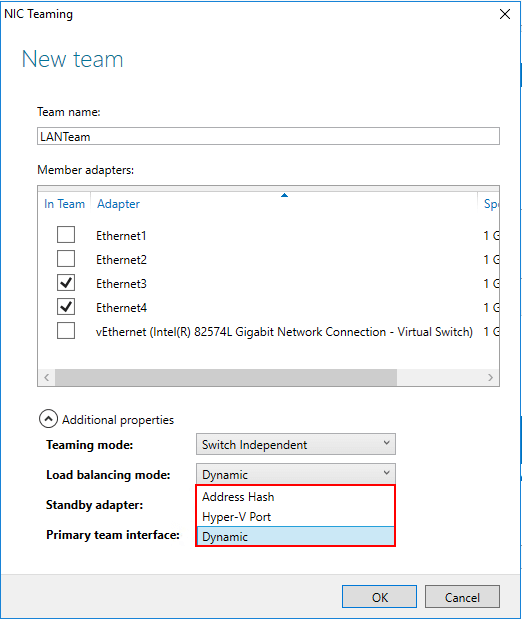

This launches the NIC Teaming dialog box. Here you give the NIC Team a Team name, check or uncheck adapters to be members of the team, and also configure Additional Properties. Click the Additional Properties dropdown menu.

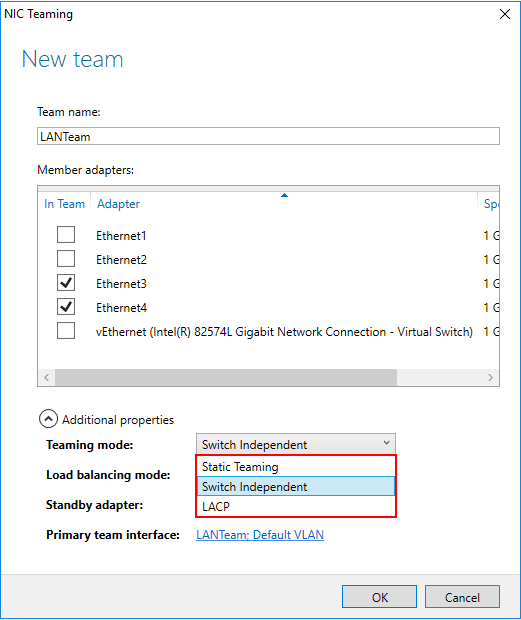

Under the Additional Properties menu, you can select the Teaming mode, Load Balancing mode, and Standby adapter/Primary team interface.

With the different teaming modes, you can control the way the network cards are teamed. The options are as follows:

- Static Teaming – In this configuration, you have to configure both the switch and the server to identify which links form the team. Since this is a static configuration involving the switch, it is a switch dependent mode of NIC teaming

- Switch Independent – The switches are not aware of the team, which allows distributing the NICs in the team across multiple network switches in your environment

- LACP – Link Aggregation Control Protocol is a dynamic form of switch dependent link aggregation. The server and the switch exchange special LACP data units (LACPDUs) on every link that has LACP enabled, which lets them set up the aggregation automatically and fail over when a link goes down

In the Load Balancing Mode, you have several interesting options here as well:

- Address Hash – Builds a hash from components of each packet, such as the source and destination TCP ports and the source and destination IP addresses, and uses it to assign the traffic stream to a team member. Hashing on the TCP ports creates the most granular distribution of traffic streams. With this method, a Hyper-V VM can still reach the network even if a NIC fails

- Hyper-V Port – Traffic is load balanced per VM: each virtual machine's switch port is tied to one team member, so a single VM's traffic uses one NIC at a time. If that NIC fails, the VM's network communication is disrupted until its port is moved to a surviving team member

- Dynamic – Uses aspects of each of the other two modes and combines them together into a single mode. Outbound traffic is distributed based on a hash of the TCP ports and IP addresses. Inbound loads are distributed in the same way as Hyper-V port mode
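To make the difference between the first two modes concrete, here is a minimal Python sketch. It is a model only: CRC32 stands in for whatever hash Windows actually uses, round-robin stands in for the real port-to-NIC assignment, and the function names, NIC names, and addresses are made up for illustration.

```python
import zlib


def address_hash_member(src_ip, src_port, dst_ip, dst_port, members):
    """Pick a team member for one TCP flow from a hash of its 4-tuple.

    CRC32 is a stand-in hash (an assumption), chosen because it is deterministic.
    """
    key = f"{src_ip}:{src_port}->{dst_ip}:{dst_port}".encode()
    return members[zlib.crc32(key) % len(members)]


def hyperv_port_members(vm_ports, members):
    """Tie each VM switch port to one team member (round-robin is an assumption)."""
    return {vm: members[i % len(members)] for i, vm in enumerate(vm_ports)}


members = ["NIC1", "NIC2"]

# Address Hash: flows from the same host can land on different NICs
flows = [("10.0.0.5", 50000 + i, "10.0.0.9", 443) for i in range(8)]
per_flow = [address_hash_member(*flow, members) for flow in flows]
print(per_flow)

# Hyper-V Port: all traffic of a given VM stays on one NIC
per_vm = hyperv_port_members(["VM-A", "VM-B", "VM-C"], members)
print(per_vm)  # {'VM-A': 'NIC1', 'VM-B': 'NIC2', 'VM-C': 'NIC1'}
```

In this model, Address Hash spreads individual flows, so one busy VM can use several NICs, while Hyper-V Port keeps each VM on a single NIC. Dynamic mode, as described above, takes the hash-based approach for outbound traffic and the per-port approach for inbound traffic.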

Hyper-V Specific NIC Teaming Considerations

There are a few Hyper-V specific considerations to be aware of when using NIC teaming. One of the first things to consider is VMQ, or Virtual Machine Queue. VMQ is a performance-enhancing technology in which the physical NIC keeps a dedicated hardware queue per virtual network adapter and uses Direct Memory Access (DMA) to place incoming packets directly into the virtual machine's memory.

Windows Server 2012 and Hyper-V introduced the converged network model, which, in conjunction with NIC teaming, allows placing multiple network services on a single team of NICs. The only exception to this consolidation is the storage network, which is still recommended to be kept separate from the other types of traffic in a Hyper-V cluster.

- Windows Server NIC teaming is designed to work with VMQ and can be enabled together

- Windows Server NIC Teaming is compatible with Hyper-V Network Virtualization or HNV

- Hyper-V Network Virtualization interacts with the NIC Teaming driver and allows NIC Teaming to distribute the load optimally with HNV

- NIC Teaming is compatible with Live Migration

- NIC Teaming can be used inside of a virtual machine as well. Virtual network adapters must be connected to the external Hyper-V virtual switches only. Any virtual network adapters that are connected to internal or private Hyper-V virtual switches won’t be able to communicate to the switch when teamed and network connectivity will fail

- Windows Server 2016 NIC Teaming supports teams with two members in VMs. Larger teams are not supported with VMs

- Hyper-V Port load-balancing provides a greater return on performance when the ratio of virtual network adapters to physical adapters increases

- A virtual network adapter will not exceed the speed of a single physical adapter when using Hyper-V port load-balancing

- If the Hyper-V Port algorithm is used with Switch Independent teaming mode, the virtual switch can register the MAC addresses of the virtual adapters on separate physical adapters, which statically balances incoming traffic
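The last three points can be illustrated with a short Python model of Hyper-V Port load balancing. This is a sketch under stated assumptions, not the actual algorithm: virtual adapters are pinned round-robin, the NIC names and 10 Gbps speeds are invented, and the MAC addresses are example values only.

```python
from collections import Counter


def pin_vnics(vnic_macs, members):
    """Register each virtual adapter MAC on one team member (round-robin assumption)."""
    if not members:
        raise ValueError("a team needs at least one member")
    return {mac: members[i % len(members)] for i, mac in enumerate(vnic_macs)}


def vnics_per_member(pinning, members):
    """Count how many virtual adapters are registered on each physical adapter."""
    counts = Counter(pinning.values())
    return {nic: counts.get(nic, 0) for nic in members}


def vnic_ceiling_gbps(pinning, speed_gbps):
    """A virtual adapter is limited to the speed of the one NIC it is pinned to."""
    return {mac: speed_gbps[nic] for mac, nic in pinning.items()}


members = ["NIC1", "NIC2"]
speed_gbps = {"NIC1": 10, "NIC2": 10}  # hypothetical link speeds

one_vm = pin_vnics(["00-15-5D-00-00-01"], members)
eight_vms = pin_vnics([f"00-15-5D-00-00-{i:02X}" for i in range(1, 9)], members)

print(vnics_per_member(one_vm, members))      # {'NIC1': 1, 'NIC2': 0}
print(vnics_per_member(eight_vms, members))   # {'NIC1': 4, 'NIC2': 4}
print(vnic_ceiling_gbps(one_vm, speed_gbps))  # {'00-15-5D-00-00-01': 10}
```

With a single virtual adapter, one physical NIC sits idle and the VM is capped at 10 Gbps even though the team totals 20 Gbps. With eight virtual adapters, both NICs carry load, which is why this mode pays off as the ratio of virtual to physical adapters grows.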

Concluding Thoughts

Windows Server and Hyper-V clusters can take advantage of the natively built-in network aggregation features by way of NIC teaming. With NIC teaming, multiple physical adapters can be combined to provide the fabric for a software-defined network adapter. Using the converged networking model with Hyper-V allows aggregating network traffic from the various networks a Hyper-V cluster requires onto a single NIC team. Administrators have quite a few options that control how the team is formed as well as which load-balancing algorithm is used. Each of these configurations has strengths and weaknesses, especially as it relates to Hyper-V virtual machines. Keep in mind that certain advanced network technologies, such as SR-IOV and 802.1X, are not compatible with NIC teaming. Be sure to take advantage of the built-in Windows NIC teaming feature, available since Windows Server 2012, which has proven to be much less problematic than third-party drivers and is fully supported by Microsoft.

